Originally posted on November 13, 2017 @ 6:40 AM

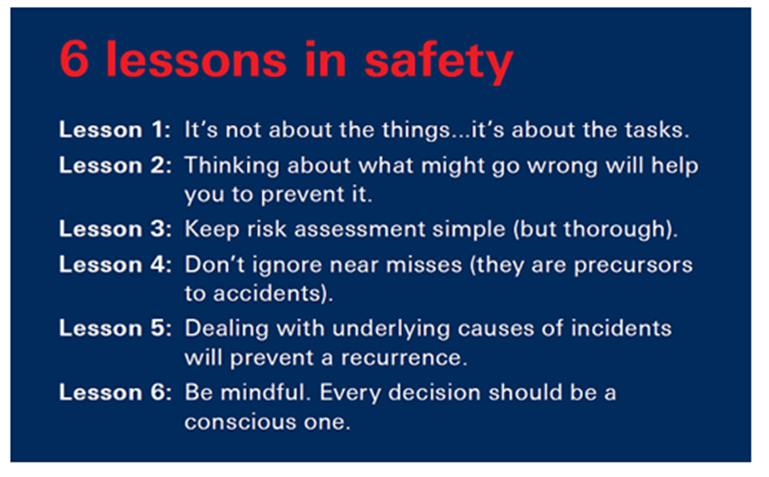

I read with interest the ‘Safer Communities: Six Lessons in Health and Safety’ from the British Safety Council. The assumptions and hidden agenda of Lessons 1, 4, 5 and 6 are most concerning and demonstrate many of the problems associated with dumbed-down Safety. Here are the 6 lessons as a reminder:

Apparently these are a way of helping a local community to be safe in 90 minutes. At best they are an indoctrination tool to more deeply inculcate a warped ethic of safety. Let’s have a quick review of these ‘lessons’ and see what they really teach.

Lesson 1: Amazing how Safety cannot shift its thinking to subjects and can only relate to objects. So of course safety is not about ‘things’ but neither is it about tasks. The fundamental ethic of safety is about people and helping people tackle risk in undertaking tasks and engaging with things. This subtle variation in emphasis makes all the difference. As it is, this lesson moves thinking from objects to objects – dumb.

Lesson 2: Thinking about what can go wrong is a good exercise in imagination and creativity but it doesn’t in itself help prevent accidents. Anxiety about what can go wrong also has to be balanced by positivity about what can go right (to borrow from the Safety Differently mantra). If we were always directed by thoughts of what could go wrong we wouldn’t have enough faith to embrace any uncertainty or do anything. Who gets up in the morning and doesn’t drive to work because of the worst that could happen? So, don’t go to work. There is a further problem with this lesson too. Unfortunately Safety, through its mechanistic assumptions and the anxiety fostered by zero, actually militates against imagination, discovery and creativity – dumb.

Lesson 3: Risk assessments should be brief but not necessarily simple. Being simple and being brief are not the same thing. If what is meant by simple is less paperwork, then this is a good lesson. However, no risk is simple and the message should not foster the idea that simple is good. The emphasis should be on thinking about by-products and trade-offs in decision making rather than on simplicity – dumb.

Lesson 4: Near misses are lessons in learning, not necessarily precursors to accidents. The idea that they are precursors is an assumption of the Heinrich myth. Causation is neither linear nor based upon the nature of a near miss. People ought to be more focused on the random nature of decision making than on the near miss as a precursor to accidents. The more we think of causation in terms of randomness and as a wicked problem, the better we will be able to tackle risk in the workplace. Again, the Heinrich myth drives such thinking and then we end up counting and reporting on near misses rather than focusing on learning – dumb.

Lesson 5: The idea of finding underlying causes is also a problem associated with traditional mechanistic safety. Seeking root cause is often an unhelpful way to think about causality (https://vimeo.com/167228715). Incidents are rarely a replication of an old incident. Life is far more random: we rarely achieve goals, strategies rarely work and circumstance is less predictable than we like to think – dumb.

Lesson 6: This is the worst lesson of all six. The human unconscious is not the enemy, and it is impossible to be human and fast or efficient at work if all decision making has to be rational and conscious. Neither should ‘mindfulness’ be confused with consciousness. Does this mean that when the unconscious is in control humans are not ‘mindful’? It is impossible for humans to make conscious decisions in everything they do, which is why humans develop heuristics and undertake many decisions in automaticity. Most of the time our heuristics and automaticity keep us safe. The unconscious is not the enemy – dumb.

Six Better Lessons in Safety

So what should be the emphasis to help keep people safe? The focus should be on:

Lesson 1: People not process.

Lesson 2: Helping not heroes.

Lesson 3: By-products not bureaucracy.

Lesson 4: Conversation not counting.

Lesson 5: Relationships not reductionism.

Lesson 6: Culture not consciousness.

21. ROOT CAUSE from Human Dymensions on Vimeo.
