Originally posted on May 26, 2015 @ 9:31 PM

In Praise of In-Between Thinking in Risk and Safety

As helpful as Kahneman’s book Thinking, Fast and Slow is, it also sustains the idea that the human mind operates in a binary paradigm. As much as Ariely’s book Predictably Irrational helps people understand the nature of human decision making, it conveys the idea that non-rational thinking is irrational. There is no doubt that Deci’s self-determination theory is helpful in understanding motivation, but it is built on dualist poles (competence and autonomy) and is limited in capturing variability across contexts. Unfortunately, many things in the world are not black and white, and they are not simple. Wishing in denial that humans were not complex doesn’t change reality.

A binary paradigm sees only one choice: it’s either zero or non-zero, you either want no injury or you want people hurt. A binary paradigm just wants wrong and right; there can be no in-between. Rather than questioning itself, the binary paradigm of zero gives no other option for variability or the in-between. Binary thinking sees no solution in the in-between, regardless of the fact that many problems are ‘wicked’ (intractable and unsolvable). As much as we may desire absolute solutions, in many cases there are none. Sometimes there can be improvements, developments, learnings and maturity, but in the human world the wish for perfection and absolutes is disconnected, delusional and fanciful. Seeing this is a simple mix-and-match exercise: set the fallibility and uncertainty of living against the claim for absolutes. We know that talk about absolutes in a fallible world alienates humans. We know that talk about perfectionism, no mistakes and no risk is nonsense. Such talk always seems good for other people but is never intended to apply to the supposedly perfect world of the binary speaker. We can spout forth about certainty in risk decisions, but in reality the big decisions in life such as marriage, mortgage and vocation are undertaken in faith, by belief. There is no certainty.

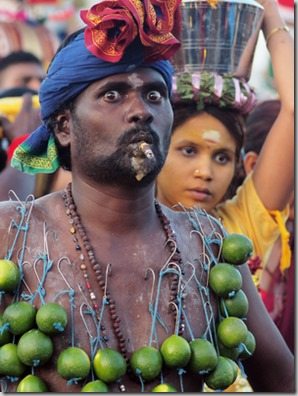

When we look at what people believe (a critical element in culture) we often observe beliefs that do not make sense to us. In the binary world there are just rational people and idiots; in the real world there are many in-betweens. For example, when we observe religious people express their faith in a number of ways, what is our response? If we are not religious, does that make religious people idiots? How does prayer to a deity make sense? Just because we don’t understand the religious world of faith-belief doesn’t mean their belief or practice is stupid or irrational. Rather, many beliefs and values in culture are non-rational (or arational), that is, they cannot be tested for validity on a cognitive, logical or rational basis. You may wish to try to invalidate a faith-belief by rational means but will soon discover that this doesn’t shift the belief and sometimes even strengthens it. Understanding cognitive dissonance is important in this respect. Using one paradigm (rationality) to judge another paradigm (arationality) is like trying to measure the weight of a cloud with a spectrometer. This is where the binary construct creates problems. There is plenty of grey between black and white but binary thinking cannot see it. The human mind has plenty of in-between between fast and slow, and there is plenty of belief that is non-rational (arational). Even in our feelings we can be loving, hateful or in-between (apathy).

Belief is an elusive thing. Even when someone tells us they believe something, just as when they say they desire or love something, we can look at their behaviour and see evidence that they don’t believe it. This is one of the great challenges in addressing issues of culture at work: there is often a massive gap between espoused belief and theory-in-use. Without some method of capturing implicit knowledge (such as that used in the MiProfile survey) there is not much chance that an organisation will be able to measure congruence between espoused and practised belief.

When it comes to belief in organisational culture, some see what they believe and others don’t believe until they see. Like the fundamentalist reading the same Bible as the atheist, each believes what they want to believe and no amount of presented evidence makes a difference. There is no in-between or ambiguity in absolutes. You can’t argue a rational construct on an arational paradigm, nor solve cultural issues with systems solutions. I have worked in paid positions as a safety advisor in a number of contexts and it is amazing how unpopular it is to tell the boss there is no easy solution. It is even more difficult to argue the case that no decision is the best decision or that a counterintuitive strategy may be more successful than the obvious big stick. It seems that when it comes to risk (uncertainty) and safety (managing uncertainty), black and white is most popular. A good read of Thaler and Sunstein’s Nudge is enlightening in this regard.

The reality with fallible humans is that we hold many conflicting beliefs in tension. Indeed, some societies and philosophies don’t accept contradiction as a test for knowledge validity. For example, in Christian belief there is an acceptance that 3=1, in Buddhist belief one equals many and in current mathematical belief zero equals infinity. The challenge then is not in the information or data itself but in the capability to discern belief and truth. The same is true for belief in culture, risk and safety: absolutes constrain dialogue and learning, perfectionism drives reporting underground and ‘dumb down’ extracts thinking from a population. This is why the discourse (enculturated ideology) of zero is so dangerous: there is no in-between in zero. Zero language in itself tells a worker that listening, dialogue, tolerance and understanding have no place in the organisation.

So what is the locus of human belief in your culture? What is espoused and what is really believed? Do your organisation and leadership have space for the in-between? Is any ground given to the non-rational in understanding risk and safety?
