Having been asked many times recently to define culture and risk, I figured all I need to do is refer people to this cracker from the archives:

A Formula for Safety Culture Failure and Success

I find it fascinating to read many uses of the words ‘culture’ and ‘risk’ that have no complementary reference to people. Even when there is some mention of people, safety culture advocates often focus on ‘behaviours’ or use obscure expressions such as ‘human factors’ that lack definition. The same applies when people speak of ‘common sense’ as if such an expression has a defined meaning for everyone.

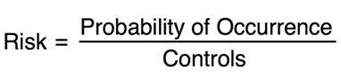

The accepted formula for risk and safety management is often expressed as follows:

Whilst the formula seems straightforward, it omits too many significant issues. Simplicity is helpful but simplistic formulas are unhelpful. Simplistic formulas are what I term ‘risk quackery’: they give the impression of being genuine and helpful but are misleading.

Risk is not independent of people, especially their psychology and culture. The concept of ‘human factors’ is too obscure. We need to know much more about human judgement and decision making if we are to properly address risk and safety in the workplace.

Here are a few specific things we need to know about human judgement and risk:

aRational: The arational dimension of human nature defines all that is not based on or governed by reason. This is neither rational nor irrational but non-rational. This includes decisions made by emotion, social manipulation and intuition.

Cognitive and Social Biases: There are over 100 cognitive and social biases which affect the way humans make decisions. For example: Fundamental attribution error is when humans overestimate the importance and power of individual personality and underestimate the influence of social situations. This is most often the case when people blame others for being stupid in judgements about risk.

Cognitive Dissonance: Developed by Leon Festinger. Refers to the mental gymnastics required to maintain consistency in the light of contradicting evidence.

Discourse: Developed by Michel Foucault. This is a cultural term that refers to more than language: it includes the transmission of power in systems of thoughts, ideas and language.

Flooding: Refers to when human senses are flooded beyond the capacity to cope. Flooding drives people to intuition, personal ‘micro-rules’ and heuristic decision making.

Heuristics: Mentioned for the first time in HB327, the handbook that complements AS/NZS 31000, heuristics are experience-based techniques for problem solving, learning and discovery. Heuristics are like mental short cuts used to speed up the process of finding a satisfactory solution where an exhaustive search is impractical. Heuristics tend to become internal ‘micro-rules’ or ‘rules of thumb’. For example: trial and error is a heuristic.

Priming: Is an implicit memory effect which influences response. Priming is received in the subconscious and transfers to enactment in the conscious. The way we ‘frame’ messages influences the thinking and decisions of others. This is evident in propaganda and mass movements.

Risk Homeostasis: Developed by Gerald Wilde. Risk homeostasis holds that everyone has his or her own fixed level of acceptable risk.

Sensemaking: Is about paying attention to ambiguity and uncertainty. Developed by Karl E. Weick to represent the seven ways we ‘make sense’ of uncertainty and contradiction.

Scotoma: A scotoma is an area of loss or impairment of visual ability surrounded by a field of normal, well-preserved vision. A blind spot can be physical, psychological and cultural. I have listed more than 20 kinds of blind spots in my book.

Unconscious: Processes of the mind of which the conscious mind is not immediately aware. The term subconscious is also used interchangeably and denotes a state ‘below’ the conscious state. The subconscious is more associated with psychoanalysis.

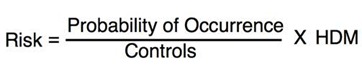

These are just some of the examples of things we should know that influence human judgement and decision making. For the purpose of this discussion let’s call these factors Human Decision Making (HDM).

Anything that involves humans is complex and there is no great advantage in putting one’s head in the sand. More ‘risk quackery’ doesn’t heal insufficient formulas. So a more realistic formula for risk and safety management should look like this:

Bernard Corden says

I foresee plenty of dollars too

Rob Long says

Hi, it is not intended to be numerical. It really serves as a semiotic about the complexity of risk.

M.Fawzy Hamdallah says

Dear Dr. Rob Long

I enjoyed this valuable article. Could you please give a numerical example for the above risk equation so that we know how to use it?

Thank you

Engineer

M.Fawzy